Indiecade and Oculus Rift vrjam

Hey! It's been two weeks since I last posted an update, and again, it's because I haven't actually done that much work on Bad Things. I've got some boring level editor stuff working, like saving the level using XML serialization, but loading it up isn't quite there yet. I'm still doing fuel cell work too, but that should be over this week. At any rate, I'm going to be working on the Indiecade and Oculus Rift vrjam thing! Also:

|

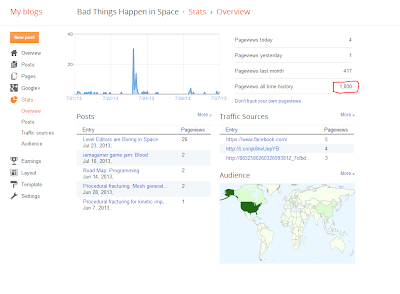

| 1000 blog views! Party! |

That's a mouthful

Yeah, but it's awesome. There's a bunch of prizes, and well, it'll be cool to make some cool stuff for the Rift. My idea is to make a game about perspective. People don't usually get to see things at different scales; I think it will be cool to see things from an unusual perspective. Perhaps the scale of an insect will be interesting? There's Pikmin, but that's all I can think of. Also there's...

Echolocation

All you have to do is implement sound wave propagation in a video game and use it to show the environment. This paper holds the secrets of Finite Difference Methods for the wave equation. I have no idea what any of that means (well, maybe a little). First, here's some cool garbage:

Compute shaders are broken.

But wait! I actually got it to work:

What is this sorcery?

The magic of compute shaders! Essentially, I just implemented the equation in the paper:

This equation is the discrete form of the pressure field created by a wave moving through a medium. The pressure field, along with the speed of propagation in the medium (c(x,y) in the equation), is all the information you need to describe the wave system. Here's the final line of my compute kernel:

float next = (2 * current) - previous + (t * source) + A * (leftinfo + rightinfo - (4 * current) + downinfo + upinfo);

which mirrors that equation. There's also like 60 lines above that, but those aren't important. Oh, and a C# script that goes along with it to manage all the buffer swapping. I ran into a lot of bugs, as you can see in the garbage images. That's one of the problems with implementing magic that you can't figure out yourself because of silly things like "discrete calculus" and "partial differential equations". There's an important condition that you have to be aware of:

I don't know why (what is a CFL?), but if you don't make sure this is satisfied (my time interval was much too large) you get something boring like:

Oh, and also my buffer swapping modulus tricks were totally incorrect. A more explicit if...else structure cleared that up for me.
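For anyone who wants to poke at this without a GPU, here's a rough CPU sketch of the same update in plain Python. It is not my actual compute shader or C# script. The names `cfl_ok`, `step` and `simulate` are made up for illustration, and I'm assuming that `A` is (c·Δt/Δx)² and that the condition above is the usual 2D stability limit c·Δt/Δx ≤ 1/√2. The `t` and `source` arguments are just passed through the same way as in the kernel line.

```python
import math


def cfl_ok(c, dt, dx):
    """Assumed stability check for the explicit 2D scheme: c*dt/dx <= 1/sqrt(2)."""
    return c * dt / dx <= 1.0 / math.sqrt(2.0)


def step(previous, current, A, source=None, t=1.0):
    """Return the next pressure grid; cells on the border are held at zero."""
    h, w = len(current), len(current[0])
    nxt = [[0.0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            neighbours = (current[y][x - 1] + current[y][x + 1]
                          + current[y - 1][x] + current[y + 1][x])
            s = source[y][x] if source is not None else 0.0
            # Same shape as the kernel line: 2*current - previous + t*source + A*(...)
            nxt[y][x] = (2 * current[y][x] - previous[y][x] + t * s
                         + A * (neighbours - 4 * current[y][x]))
    return nxt


def simulate(steps, size=9, c=1.0, dt=0.5, dx=1.0):
    """Drop a single pressure pulse in the middle and advance it `steps` times."""
    if not cfl_ok(c, dt, dx):
        raise ValueError("CFL condition violated; make the time step smaller")
    A = (c * dt / dx) ** 2  # assumption: A bundles speed, time step and cell size
    previous = [[0.0] * size for _ in range(size)]
    current = [[0.0] * size for _ in range(size)]
    current[size // 2][size // 2] = 1.0
    for _ in range(steps):
        # Explicit rotation instead of modulus tricks:
        # the old "current" becomes "previous", the new grid becomes "current".
        previous, current = current, step(previous, current, A)
    return current
```

Calling `simulate(1)` leaves the centre cell at 1.0 and puts 0.25 in each of its four neighbours, which is the pulse starting to spread. Calling it with `dt=1.0` trips the stability check instead of quietly producing garbage.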

Pressure fields

If you didn't know, sound is an oscillating pressure field. When you hear sound, your eardrum simply gets pushed in and out really, really fast and your brain transforms that into sound qualia. If you were to sample the pressure field (red for positive, green for negative) at a rate of 48 kHz, you could actually get sound out of this simulation. The same people wrote another paper about doing just that, except that it took them something like 3 hours per second to sample enough pressure information. That's not exactly real time.

Luckily, I won't be using it for that. I'm going to try to implement echolocation in my vrjam game. But first I need to figure out how this generalizes to 3D and also how to voxelize the game scene. I'll be working on that over the next week. Here's a final video showing off attenuation and an oscillating pressure field (sound waves!):

The frequency in this video goes from 1 Hz to 25 Hz. Unfortunately, I can't go higher than 60 Hz because that's half the frame rate. I'm literally setting pressure field values and toggling them positive/negative once the period interval elapses, so I can't do it any faster than half the frame rate (60 Hz on a 120 Hz monitor with vsync enabled).
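Here's a tiny sketch of why the limit sits at half the frame rate, again in plain Python rather than the real script. `source_sign` and its parameters are hypothetical names, and I'm assuming the source is a square wave whose sign can only change once per rendered frame.

```python
def source_sign(frame_index, frequency_hz, frame_rate_hz):
    """Sign (+1 or -1) of a square-wave source at a given rendered frame."""
    if frequency_hz > frame_rate_hz / 2:
        # A full cycle needs at least two frames: one positive, one negative.
        raise ValueError("frequency exceeds half the frame rate")
    # Number of half-periods (sign flips) that have elapsed by this frame.
    flips = int((2 * frequency_hz * frame_index) // frame_rate_hz)
    return 1 if flips % 2 == 0 else -1
```

At 120 frames per second a 60 Hz source flips sign on every single frame, so there's no room left to go faster. A 30 Hz source holds each sign for two frames.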

I hope you guys find this interesting. I was so excited that I was yelling earlier when I went from glitchy squares to an actual propagation simulation. It'll be tricky to try and go from 2D to 3D without a paper to give me magic equations, but I think I can do it. I have no idea how to discretize a partial differential equation, but if I have to learn discrete calculus to do it, then so be it.
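As a starting point for the 3D version, here's my guess at what the update should look like, assuming the same scheme carries over with the standard six-neighbour stencil on a cubic grid. This is not from the paper. The symbols are assumptions: p is the pressure, n the time step, (i, j, k) the voxel index, and A = (cΔt/Δx)² as in the 2D kernel. The source term is left out.

```latex
\[
p^{n+1}_{i,j,k} = 2p^{n}_{i,j,k} - p^{n-1}_{i,j,k}
  + A\left(p^{n}_{i-1,j,k} + p^{n}_{i+1,j,k} + p^{n}_{i,j-1,k} + p^{n}_{i,j+1,k}
  + p^{n}_{i,j,k-1} + p^{n}_{i,j,k+1} - 6p^{n}_{i,j,k}\right),
\qquad A = \left(\frac{c\,\Delta t}{\Delta x}\right)^{2}
\]
\[
\text{stability (3D):}\quad \frac{c\,\Delta t}{\Delta x} \le \frac{1}{\sqrt{3}}
\]
```

The only changes from the 2D line are two extra neighbours, a 6 in place of the 4, and a slightly tighter limit on the time step.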

All the code for this will be up on GitHub in the next few days. I'll make a post when I get the repository set up.
